Part 1. Why API Costs Hit $10 a Day — Server Design and Model Strategy

Series: Can AI Agents Actually Run Business Operations? — Part 1

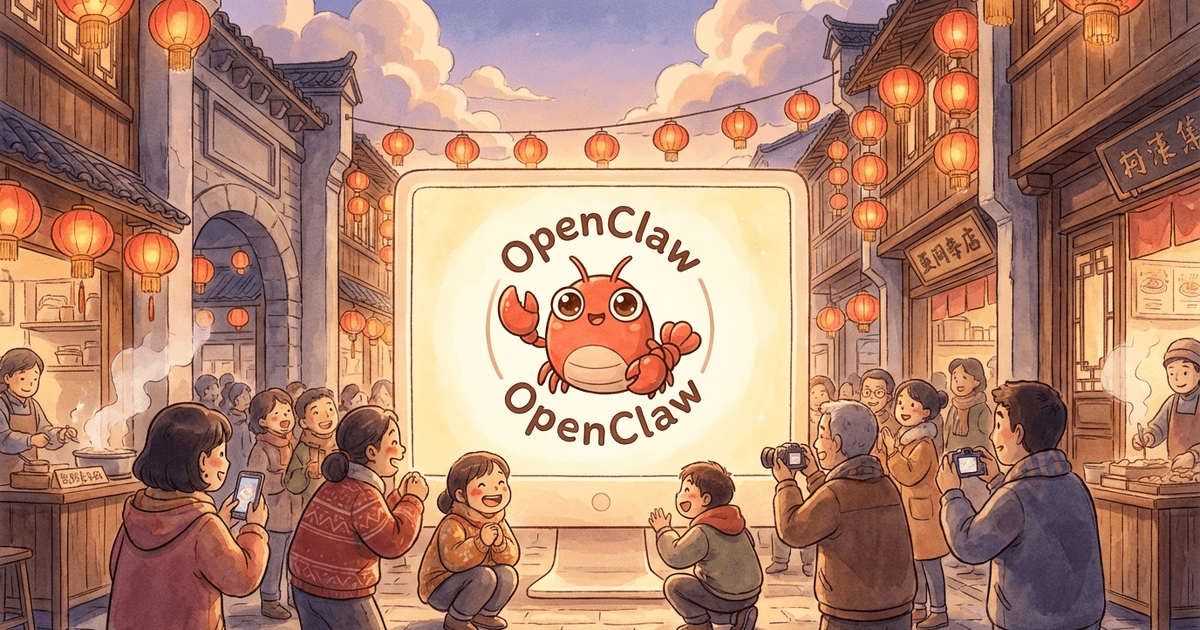

OpenClaw has been getting a lot of attention as an AI agent platform since late 2025. But how usable is it in real business work? And how does it hold up from a security standpoint?

In this series, I share what I learned from using OpenClaw on an actual client engagement — rough edges, failures, and course corrections included. I am not an OpenClaw expert; this is one engineer working through the real problems. I hope it helps people who are evaluating OpenClaw or similar AI agent platforms for adoption, as a kind of “here is what to watch out for” reference.

In this post (Part 1), I walk through why my API bill came in 5-10x higher than expected on day one. The traps include OpenClaw not auto-compressing context, and Cron and HEARTBEAT running the same task twice. A 3-tier model strategy (including a local LLM) brought the numbers back under control.

For the overall project summary, please see the project page.

1. Server Selection and Base Setup

We chose Hetzner Cloud for a 24/7 agent server.

| Item | Value |
|---|---|
| Location | Helsinki (hel1) |
| OS | Ubuntu 24.04 |
| Spec | 4vCPU / 16GB RAM / 150GB SSD |
| Monthly | about €12 |

Latency is not a big deal for long-running agents, so the European region was fine. Cloud was the right fit because procuring dedicated hardware was not realistic for a client project, we needed a sandbox separated from the internal network, and 24/7 uptime was a requirement.

2. OpenClaw Installation — Things to Watch

What --accept-risk actually means

OpenClaw onboarding runs like this:

npm install -g openclaw@latest

openclaw onboard --install-daemon --non-interactive --accept-risk

If you leave out --accept-risk, you get:

Non-interactive setup requires explicit risk acknowledgement.

OpenClaw runs with full host access, so it asks for explicit risk acknowledgement. The agent can read and write files and run arbitrary commands. You should understand this before deciding to install it.

Browser settings on a server without GUI

On a GUI-less server running as root, two settings are needed:

openclaw config set browser.noSandbox true

openclaw config set browser.headless true

You also need to install Google Chrome Stable in advance:

wget -q https://dl.google.com/linux/direct/google-chrome-stable_current_amd64.deb -O /tmp/chrome.deb

apt-get install -y /tmp/chrome.deb

Exposing the web dashboard

OpenClaw has a built-in web management UI. To access it from outside, I used sslip.io to turn the server IP into a domain, then set up Nginx as a reverse proxy with Let’s Encrypt.

One setting to be careful about: OpenClaw requires device pairing as a brute-force protection. For external access, this has to be disabled:

openclaw config set gateway.controlUi.dangerouslyDisableDeviceAuth true

The setting name contains "dangerously" for a reason. I compensated with three layers of protection: Nginx Basic Auth, a gateway token, and HTTPS.

3. Security Settings — Do Them First

If you run OpenClaw on a cloud server, please do these on day one.

- Full host access: --accept-risk is literal. The agent can read/write files and run commands freely.

- .env file permissions: The file is created with 644 (world-readable) by default. It contains API keys, so chmod 600 is required.

- Template files: Be careful not to accidentally write real API keys into git-tracked template files like .env.example.

chmod 600 /root/.openclaw/.env

It is easy to skip these while you are focused on getting things to work. I would recommend doing them before anything else.
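The permission check can also be scripted so it is not forgotten. The following is a minimal sketch in Python, not part of OpenClaw; the function names are hypothetical, and the only thing taken from the post is the 600 target mode.

```python
import os
import stat


def is_owner_only(path: str) -> bool:
    """Return True when group and others have no permission bits on path."""
    mode = stat.S_IMODE(os.stat(path).st_mode)
    return mode & 0o077 == 0


def tighten(path: str) -> None:
    """Same effect as chmod 600: owner read/write only."""
    os.chmod(path, stat.S_IRUSR | stat.S_IWUSR)
```

A file created with mode 644 fails is_owner_only; after tighten it passes. Pointing both at /root/.openclaw/.env mirrors the manual command above.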

4. Cost Surprise — 5-10x Over Budget

The day after setup, I checked OpenClaw’s /usage dashboard and found costs were much higher than expected.

- Expected: $1-2/day ($30-60/month)

- Actual: $8-10/day ($240-300/month)

I broke down the causes and found four issues stacking up.

Cause 1: Context token bloat (the biggest factor)

The main session had grown to about 310,000 tokens.

agent:main:main │ 310k/200k (155%)

OpenClaw does not auto-compress conversation history. Unlike Claude Code, it keeps everything, from setup logs to error messages. Every message sends the full 310K tokens to the API again.

| State | Per message | Per 100 messages |
|---|---|---|
| 310K tokens | $0.023 | $2.30 |
| 40K tokens (after reset) | $0.003 | $0.30 |

Even if you are using a cheap model, a large context will eat that advantage.
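The per-message figures in the table can be reproduced with simple arithmetic. The sketch below assumes an input price of $0.075 per million tokens. That number is back-derived from the table ($0.023 for 310K tokens), not quoted from any provider's price list, and output tokens are ignored.

```python
# Assumed input price, back-derived from the table above.
# It is an illustration, not an official price.
ASSUMED_USD_PER_MILLION_INPUT_TOKENS = 0.075


def cost_per_message(
    context_tokens: int,
    usd_per_million: float = ASSUMED_USD_PER_MILLION_INPUT_TOKENS,
) -> float:
    """Input cost of one message when the whole context is resent."""
    return context_tokens * usd_per_million / 1_000_000


for tokens in (310_000, 40_000):
    per_message = cost_per_message(tokens)
    print(f"{tokens:>7} tokens: ${per_message:.3f} per message")
```

Under that assumption the cost scales linearly with context size, which is why shrinking the session matters more than switching to a slightly cheaper model.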

Cause 2: Cron jobs running all the time

A monitoring cron running every 30 minutes was hitting the API 48 times a day.
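The 48 calls per day follow directly from the schedule interval. A small sketch (the function name is hypothetical) makes it easy to see what a longer interval would buy:

```python
def calls_per_day(interval_minutes: int) -> int:
    """Number of runs in 24 hours for a job scheduled every interval_minutes."""
    if interval_minutes <= 0:
        raise ValueError("interval_minutes must be positive")
    return (24 * 60) // interval_minutes
```

With a 30-minute interval this gives 48 runs a day; stretching the interval to 120 minutes would cut that to 12.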

Cause 3: Cron and HEARTBEAT both running

This one is the hardest to notice. OpenClaw has two scheduling mechanisms:

| Mechanism | Session | How to stop |
|---|---|---|
| Cron | Isolated session | Manage with openclaw cron |
| HEARTBEAT | Inside main session | Edit HEARTBEAT.md |

If you stop cron but leave the same task in HEARTBEAT.md, it keeps running. In practice, after I had disabled all crons, web_search calls continued.
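One way to catch a task that lingers in HEARTBEAT.md after its cron has been disabled is a plain text search. The sketch below is an assumption-heavy illustration: it treats HEARTBEAT.md as ordinary text and does not rely on any OpenClaw-specific file format, and the keywords are whatever you choose to look for.

```python
from pathlib import Path


def lines_mentioning(text: str, keywords: list[str]) -> list[tuple[int, str]]:
    """Return (line_number, line) pairs that mention any keyword, ignoring case."""
    lowered = [k.lower() for k in keywords]
    hits = []
    for number, line in enumerate(text.splitlines(), start=1):
        if any(k in line.lower() for k in lowered):
            hits.append((number, line.strip()))
    return hits


def check_heartbeat(path: str, keywords: list[str]) -> list[tuple[int, str]]:
    """Scan a HEARTBEAT.md file; a missing file simply yields no hits."""
    file = Path(path)
    if not file.exists():
        return []
    return lines_mentioning(file.read_text(encoding="utf-8"), keywords)
```

Running check_heartbeat with keywords such as "monitor" or "search" after disabling crons gives a quick list of lines worth reviewing by hand.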

Cause 4: Heavy use of web_search

web_search: 186 calls

web_fetch: 33 calls

The HEARTBEAT auto-processing was triggering web searches more than I realized.

5. Countermeasures — Compress Sessions, Review Scheduled Tasks

Immediate steps and their effect:

| Action | Effect |
|---|---|
| Session compression (/reset) | 310K → 21K tokens (93% reduction) |
| Disable all cron jobs | Background API calls went to zero |
| Remove monitoring tasks from HEARTBEAT | Stopped the 30-minute auto-search |

Before vs. after:

| Metric | Before | After |
|---|---|---|
| Main session tokens | 310,000 | 21,000 |
| Background API calls | 48/day | 0/day |
| Estimated monthly cost | $240-300 | $10-20 |

6. Local LLM — Ollama + Gemma3 4B

To fundamentally solve the 24/7 cron cost problem (which would have been $50-100/month on its own), I added a local LLM.

Model selection

| Model | Result | Note |
|---|---|---|
| Qwen3 4B | Not adopted | Thinking mode always on. A 3-line answer took 2,500 tokens and 3 minutes |
| Gemma3 4B | Adopted | The same task took 60 tokens and 3 seconds |

Qwen3’s chat template force-injects a <think> tag. On CPU inference, this slows things down significantly. I tried /no_think and a custom Modelfile, but the effect was limited.

In my view, Thinking-forced models are not a good fit for CPU-only inference environments.

Server resource usage

Hetzner: 4vCPU (AMD EPYC) / 16GB RAM / no GPU

Gemma3 4B loaded:

Disk: 3.3 GB

RAM: 4.2 GB (CPU inference)

Free RAM: 10 GB (plenty of room for OpenClaw + OS)

Speed: 14-15 tok/s

A 16GB-RAM server is enough. Even without a GPU, response speed is workable for routine tasks.
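To measure throughput like this yourself, you can call Ollama's local HTTP API and read the timing fields it returns. The sketch below uses only the Python standard library. The endpoint is Ollama's default, the model tag gemma3:4b is an assumption (use whatever tag you actually pulled), and the helper names are hypothetical.

```python
import json
import urllib.request

OLLAMA_URL = "http://localhost:11434/api/generate"  # Ollama's default local endpoint
MODEL_TAG = "gemma3:4b"  # assumed tag; check the output of "ollama list"


def build_payload(prompt: str, model: str = MODEL_TAG) -> bytes:
    """JSON body for a single non-streaming generation request."""
    return json.dumps({"model": model, "prompt": prompt, "stream": False}).encode("utf-8")


def tokens_per_second(eval_count: int, eval_duration_ns: int) -> float:
    """Ollama reports eval_duration in nanoseconds."""
    return eval_count / (eval_duration_ns / 1e9)


def generate(prompt: str, timeout: float = 120.0) -> tuple[str, float]:
    """Send one prompt to the local Ollama server; return (text, tokens/sec)."""
    request = urllib.request.Request(
        OLLAMA_URL,
        data=build_payload(prompt),
        headers={"Content-Type": "application/json"},
    )
    with urllib.request.urlopen(request, timeout=timeout) as response:
        body = json.load(response)
    return body["response"], tokens_per_second(body["eval_count"], body["eval_duration"])
```

Calling generate with a short routine prompt on the server returns the answer and the measured generation speed, which can be compared against the 14-15 tok/s figure above.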

7. The 3-Tier Model Strategy

Based on this experience, we settled on a 3-tier strategy that matches model tier to task characteristics.

| Tier | Model | Use | Cost band |
|---|---|---|---|
| Tier 1 | Claude Sonnet / Opus | Code generation, deep analysis, inconsistency detection | medium-high |
| Tier 2 | Gemini 3 Flash | Orchestration, web summarization, HTML extraction | low |
| Tier 3 | Gemma3 4B (local) | Heartbeat response, routine processing | $0 |

Execution strategy

- Code-First: Convert rule checks into Python code and run them without an LLM (zero execution cost)

- Smart Routing: Low-load tasks go to Gemini Flash, heavy tasks go to Claude Sonnet

- Local Preference: Keep routine work on Ollama + Gemma3

- Code generation is Sonnet only: Gemini Flash tends to fall back to hardcoding (details in Part 2), so it is not suitable for code generation
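The routing rules above can be written down as a small lookup table. This is only a sketch of the idea, not how OpenClaw routes internally: the task categories are hypothetical, the cloud model identifiers are the ones used later in this post, and the local identifier ollama/gemma3:4b is an assumed placeholder.

```python
from enum import Enum
from typing import Optional


class Task(Enum):
    RULE_CHECK = "rule_check"            # deterministic check, handled by plain code
    ROUTINE = "routine"                  # heartbeat replies, routine processing
    SUMMARIZATION = "summarization"      # orchestration, web summaries, HTML extraction
    CODE_GENERATION = "code_generation"  # Sonnet only, per the rule above
    DEEP_ANALYSIS = "deep_analysis"      # heavy tasks


# None means "no LLM call at all" (Code-First).
ROUTES: dict[Task, Optional[str]] = {
    Task.RULE_CHECK: None,
    Task.ROUTINE: "ollama/gemma3:4b",  # hypothetical local identifier
    Task.SUMMARIZATION: "google/gemini-3-flash-preview",
    Task.CODE_GENERATION: "anthropic/claude-sonnet-4-6",
    Task.DEEP_ANALYSIS: "anthropic/claude-sonnet-4-6",
}


def pick_model(task: Task) -> Optional[str]:
    """Return the model for a task, or None when plain code should handle it."""
    return ROUTES[task]
```

Keeping the mapping in one table makes the cost decision explicit: anything that can be expressed as a rule check never reaches a paid model.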

Let OpenClaw configure itself

Rather than editing config files directly from Claude Code, it worked better to prompt OpenClaw itself and let it update its own settings.

openclaw agent --agent main --message 'Please change the default model to google/gemini-3-flash-preview.

Use models based on task difficulty:

- Normal: google/gemini-3-flash-preview

- Medium: anthropic/claude-sonnet-4-6

- Hard: anthropic/claude-opus-4-6'

OpenClaw updated its own default model and recorded the routing strategy in SOUL.md.

8. Takeaways

- OpenClaw does not auto-compress context. Unlike Claude Code, it grows without bound unless you /reset manually or configure a rule.

- Cron and HEARTBEAT are different mechanisms. If you stop one, always check the other.

- Cheap models do not save you from large contexts. Gemini Flash is low-cost per token, but if you send 310K tokens every message, it is not cheap anymore. Cost = price × tokens × calls, so you have to evaluate all three.

- Run /reset right after setup. Setup sessions accumulate a lot of trial-and-error context that you do not need going forward.

- Avoid Thinking-forced models on CPU inference. They are fine on GPU, but unusable on CPU-only servers.

- Check /usage daily. A one-day delay in noticing an anomaly can mean a $10 difference.

9. Post-Setup Checklist

- Check cost on /usage (establish a baseline)

- Check token count per session with openclaw status

- Run openclaw cron list to find unneeded crons

- Review HEARTBEAT.md for unnecessary tasks

- Verify .env has 600 permissions

- Run /reset after setup is complete

- Set a monthly spend cap in your API provider dashboard

- Browser settings: headless: true + noSandbox: true

In Part 2, I will share an experiment where we gave AI models only the rule specification (in Japanese text) and asked them to generate Python check code on their own. Comparing three models, we found that Gemini Flash had been hardcoding the expected answers.

Related Posts

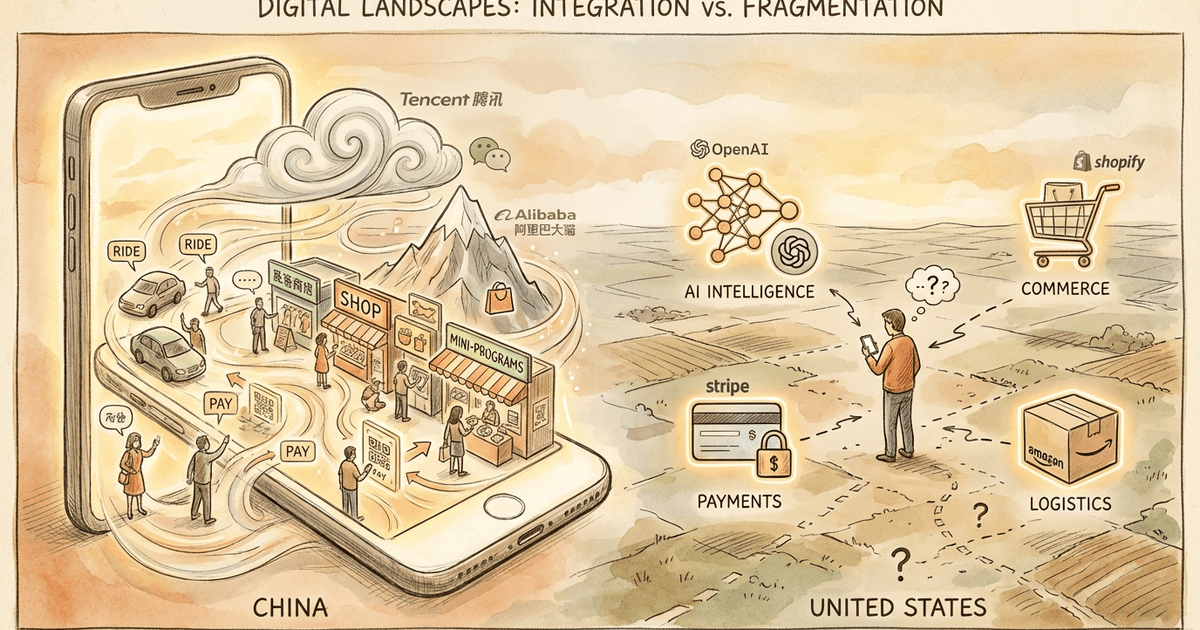

China's AI Now: OpenClaw Fever and How Alibaba, Tencent, and ByteDance Are Racing to Monetize AI

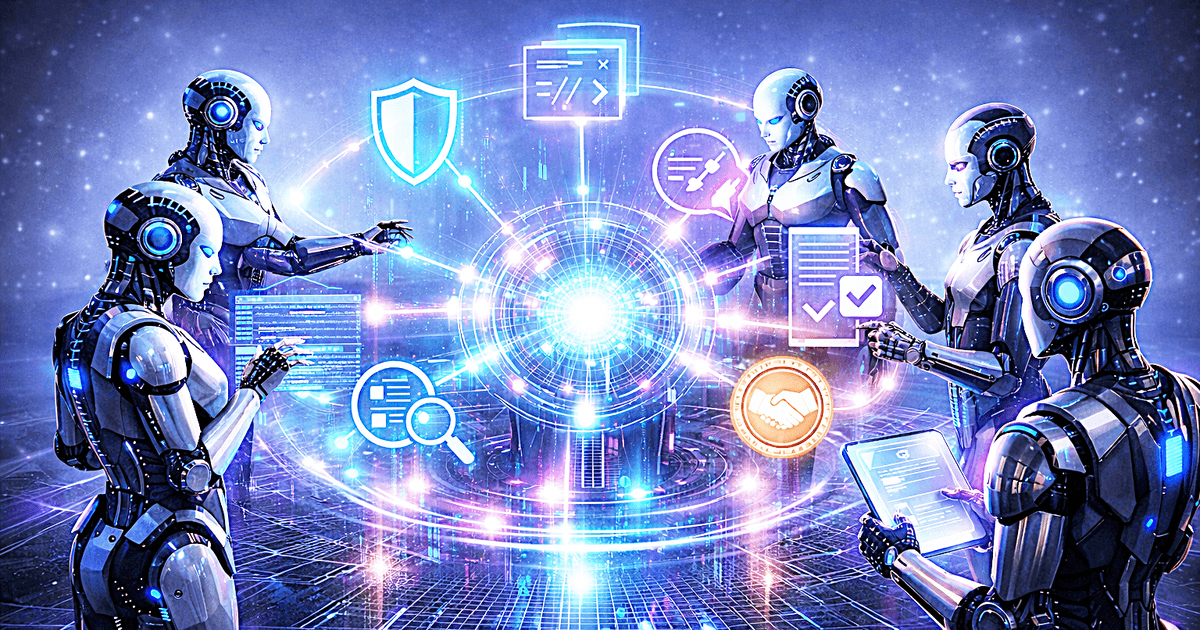

The New World Driven by Multi-AI Agents — When AIs Review, Complement, and Negotiate with Each Other